Project Info

Reconstructing an image from its local descriptors

Authors. Philippe Weinzaepfel; Hervé Jégou; Patrick Pérez

Conference details. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2011, Colorado Springs, United States.

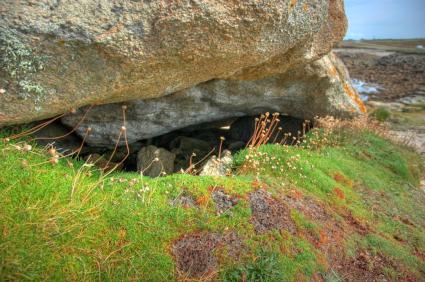

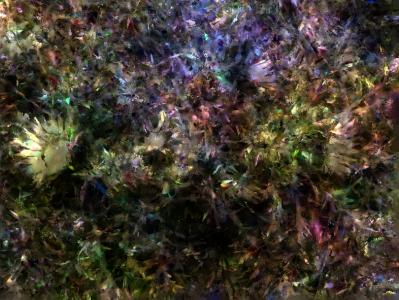

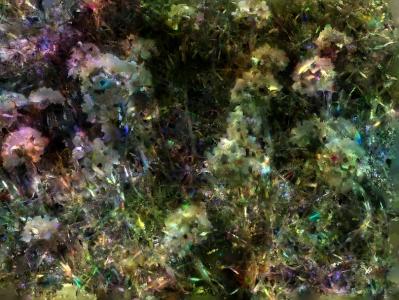

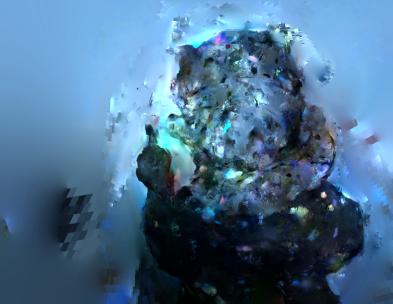

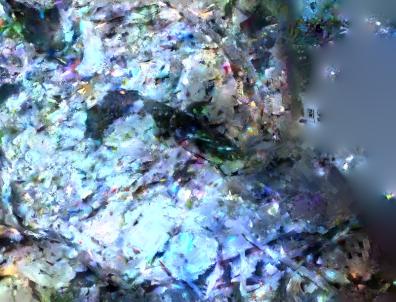

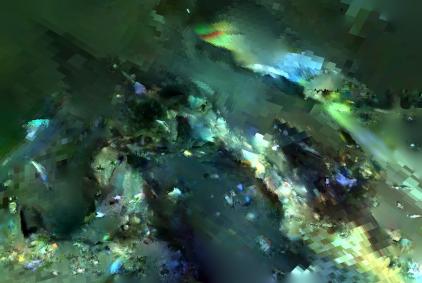

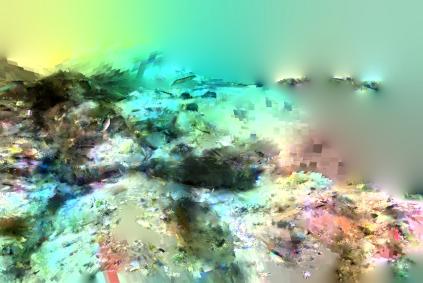

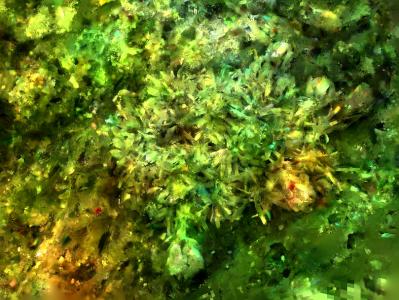

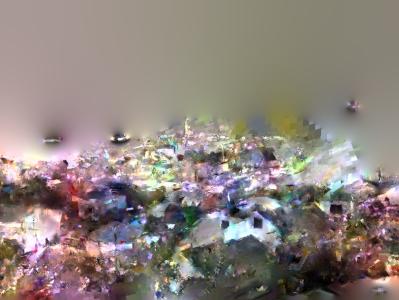

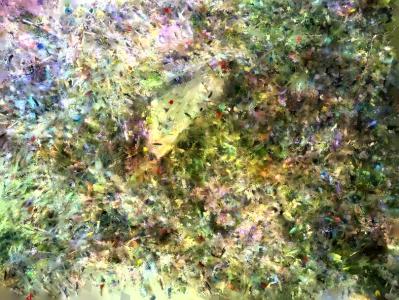

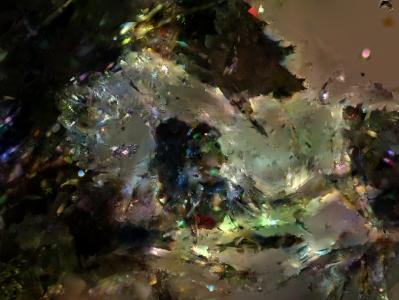

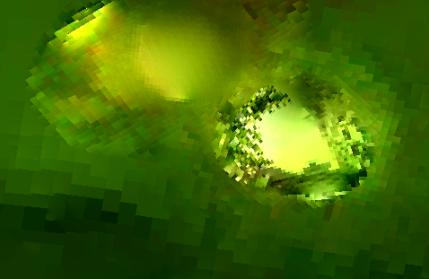

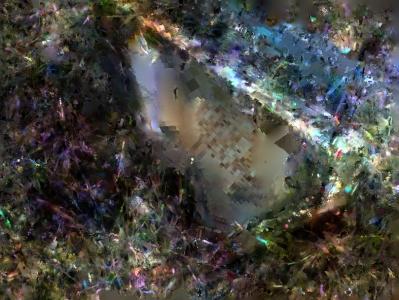

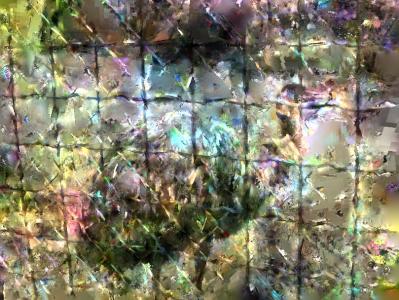

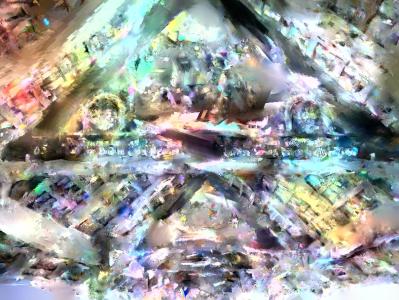

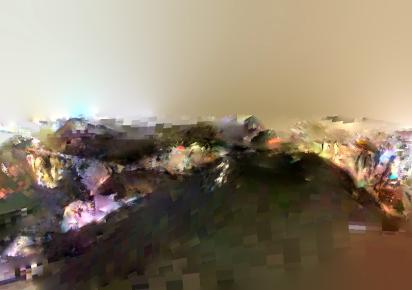

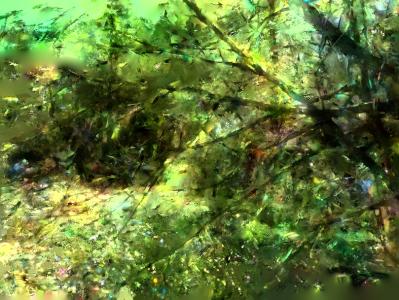

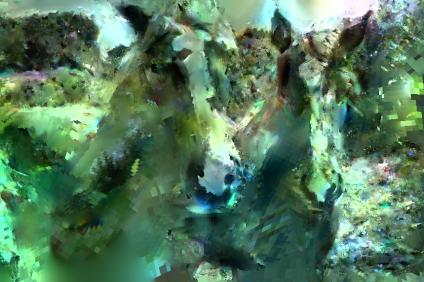

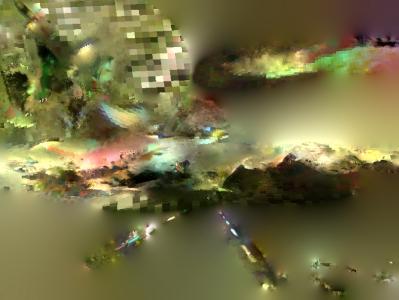

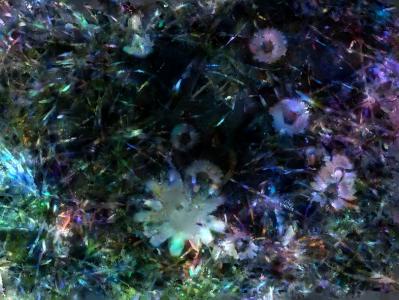

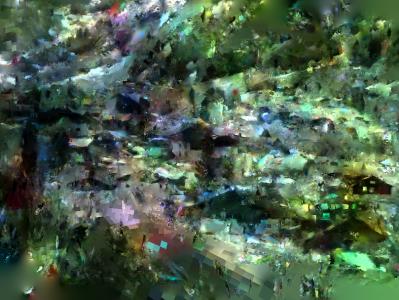

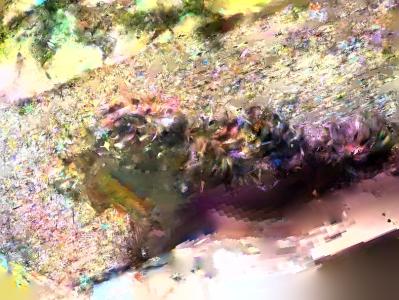

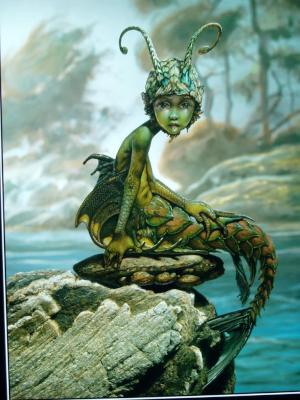

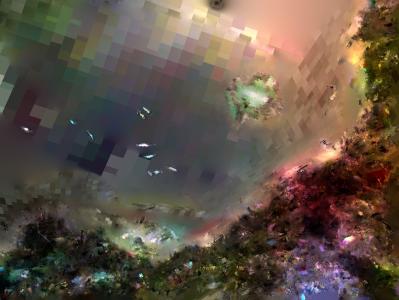

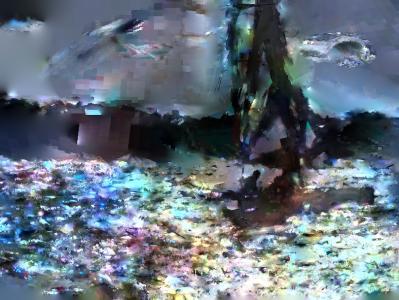

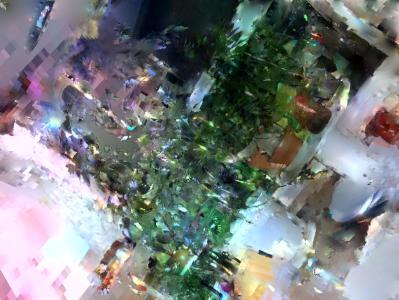

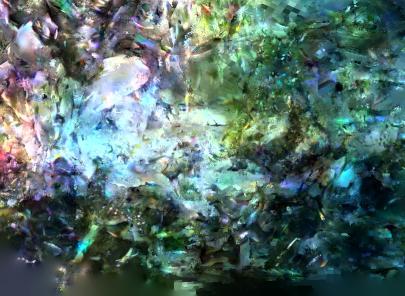

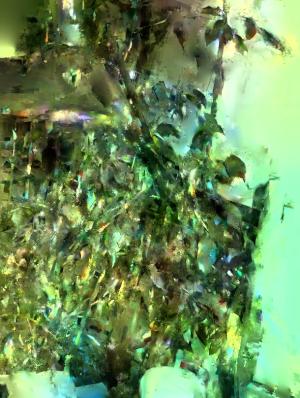

Summary. This paper shows that an image can be approximately reconstructed from the output of black-box local description software, such as the software classically used for image indexing. Our approach first uses an off-the-shelf image database to find patches that are visually similar to each region of interest of the unknown input image, as measured by their associated local descriptors. These patches are then warped into the input image domain according to the interest region geometry and seamlessly stitched together. Finally, the texture-free regions that are still missing are completed by smooth interpolation. As our experiments demonstrate, visually meaningful reconstructions can be obtained from image local descriptors such as SIFT alone, provided that the geometry of the regions of interest is known. In most cases, the reconstruction allows the semantic content of the image to be clearly interpreted. As a result, this work raises critical privacy and rights issues when local descriptors of photos or videos are given away for indexing and search purposes.
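
The patch retrieval step can be illustrated with a minimal sketch. It assumes that each database patch is stored with its descriptor and that "visually similar" means the smallest squared Euclidean distance between descriptors, found by exhaustive search. The names `nearest_patches` and `squared_l2`, the toy 4-D vectors, and the patch ids are hypothetical and are not taken from the paper's implementation.

```python
from typing import List, Sequence, Tuple

# A descriptor is a fixed-length vector (e.g. 128 floats for SIFT).
Descriptor = Sequence[float]


def squared_l2(a: Descriptor, b: Descriptor) -> float:
    """Squared Euclidean distance between two equal-length descriptors."""
    if len(a) != len(b):
        raise ValueError("descriptor lengths differ")
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_patches(queries: List[Descriptor],
                    database: List[Tuple[Descriptor, str]]) -> List[str]:
    """For each query descriptor, return the id of the database patch whose
    descriptor is closest in squared L2 distance (exhaustive search)."""
    if not database:
        raise ValueError("empty patch database")
    return [min(database, key=lambda entry: squared_l2(q, entry[0]))[1]
            for q in queries]


# Hypothetical toy 4-D descriptors standing in for 128-D SIFT vectors.
database = [([0.0, 0.0, 1.0, 0.0], "sky"),
            ([1.0, 0.0, 0.0, 0.0], "brick"),
            ([0.0, 1.0, 0.0, 0.0], "grass")]
queries = [[0.9, 0.1, 0.0, 0.0], [0.0, 0.2, 0.8, 0.0]]
print(nearest_patches(queries, database))  # ['brick', 'sky']
```

Exhaustive search costs one distance computation per database entry for every query. For a realistically sized image database, an approximate nearest-neighbor index would normally replace the linear scan.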
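
Warping a retrieved patch "according to interest region geometry" can be sketched as follows, under the simplifying assumption that each region is similarity-covariant: it is described by a center (cx, cy), a scale, and an orientation theta, as SIFT keypoints are. Affine-covariant (elliptical) regions would require a full 2x2 matrix instead of scale times rotation. The sketch uses inverse mapping with nearest-neighbor sampling and omits the seamless stitching. All function names and parameters here are hypothetical.

```python
import math
from typing import List, Optional, Tuple


def patch_to_image(u: float, v: float, cx: float, cy: float,
                   scale: float, theta: float) -> Tuple[float, float]:
    """Map normalized patch coordinates (u, v) in [-1, 1]^2 to image
    coordinates for a region with center (cx, cy), scale and orientation."""
    c, s = math.cos(theta), math.sin(theta)
    return cx + scale * (c * u - s * v), cy + scale * (s * u + c * v)


def image_to_patch(x: float, y: float, cx: float, cy: float,
                   scale: float, theta: float) -> Tuple[float, float]:
    """Inverse of patch_to_image: translate, divide by scale, rotate by -theta."""
    c, s = math.cos(theta), math.sin(theta)
    dx, dy = (x - cx) / scale, (y - cy) / scale
    return c * dx + s * dy, -s * dx + c * dy


def warp_patch(patch: List[List[float]], canvas: List[List[Optional[float]]],
               cx: float, cy: float, scale: float, theta: float) -> None:
    """Paste a square patch into canvas in place by inverse mapping with
    nearest-neighbor sampling; pixels outside the region are left untouched."""
    size = len(patch)
    for y in range(len(canvas)):
        for x in range(len(canvas[0])):
            u, v = image_to_patch(x, y, cx, cy, scale, theta)
            if -1.0 <= u <= 1.0 and -1.0 <= v <= 1.0:
                # Rescale [-1, 1] to a patch index in [0, size - 1] and round.
                col = int((u + 1.0) / 2.0 * (size - 1) + 0.5)
                row = int((v + 1.0) / 2.0 * (size - 1) + 0.5)
                canvas[y][x] = patch[row][col]


# Paste a 2x2 patch into an empty 5x5 canvas at center (2, 2), scale 1, no rotation.
canvas: List[List[Optional[float]]] = [[None] * 5 for _ in range(5)]
warp_patch([[1.0, 2.0], [3.0, 4.0]], canvas, cx=2, cy=2, scale=1, theta=0)
```

Only the central 3x3 block of the canvas is written, and pixels outside the region stay `None`. Mapping each output pixel back into the patch, instead of pushing patch pixels forward, guarantees that the covered region has no holes.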
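
The final completion step fills pixels that no patch covered by "smooth interpolation". The summary does not name the exact scheme. The sketch below therefore uses harmonic (Laplace) interpolation as one common choice: known pixels stay fixed, and each missing pixel repeatedly takes the mean of its 4-neighbors until the values settle. The function `fill_missing` and its `iterations` parameter are hypothetical. A full-size image would normally use a sparse linear solver instead of plain Gauss-Seidel sweeps.

```python
from typing import List, Optional


def fill_missing(image: List[List[Optional[float]]],
                 iterations: int = 500) -> List[List[float]]:
    """Fill None pixels by harmonic (Laplace) interpolation.

    Known pixels stay fixed; each unknown pixel is repeatedly replaced by the
    mean of its in-bounds 4-neighbors (Gauss-Seidel: updates are in place).
    """
    known_vals = [p for row in image for p in row if p is not None]
    if not known_vals:
        raise ValueError("no known pixels to interpolate from")
    start = sum(known_vals) / len(known_vals)  # initial guess for unknowns
    out = [[start if p is None else p for p in row] for row in image]
    height, width = len(out), len(out[0])
    for _ in range(iterations):
        for y in range(height):
            for x in range(width):
                if image[y][x] is not None:
                    continue  # known pixel: keep its value
                neighbors = [out[ny][nx]
                             for ny, nx in ((y - 1, x), (y + 1, x),
                                            (y, x - 1), (y, x + 1))
                             if 0 <= ny < height and 0 <= nx < width]
                out[y][x] = sum(neighbors) / len(neighbors)
    return out


row = fill_missing([[0.0, None, None, None, 4.0]])[0]
print([round(p, 3) for p in row])  # [0.0, 1.0, 2.0, 3.0, 4.0]
```

In one dimension, the harmonic fill reduces to linear interpolation between the known endpoints. In two dimensions, it produces the smoothest surface that matches the surrounding known pixels. This suits areas that are assumed to be texture-free.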
