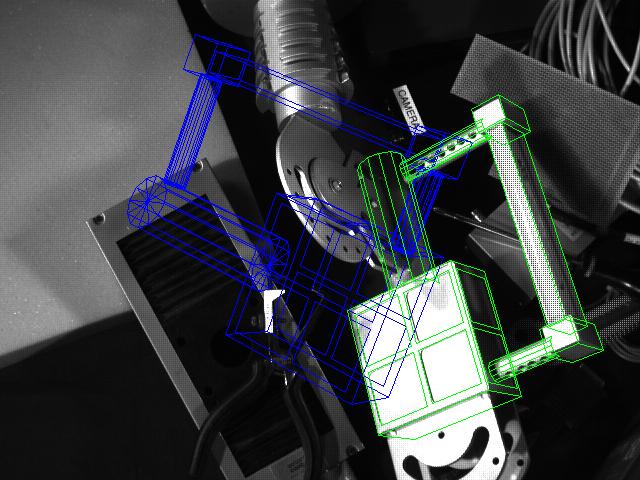

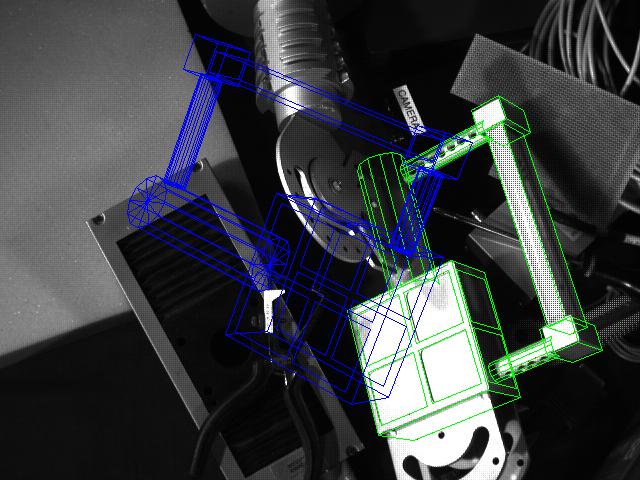

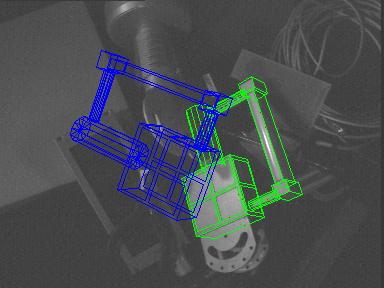

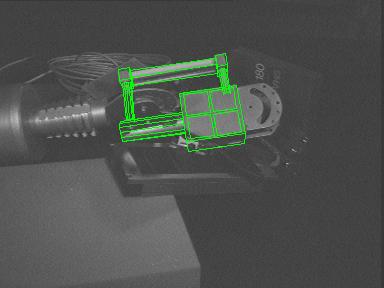

This page presents a real-time, robust and efficient 3D model-based tracking algorithm for visual servoing. Monocular 3D tracking is performed with a virtual visual servoing approach, which is similar to more classical non-linear pose computation techniques. Robustness is obtained by integrating an M-estimator into the virtual visual control law via an iteratively re-weighted least squares implementation.
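To illustrate the robust estimation step, here is a minimal sketch of iteratively re-weighted least squares (IRLS) with an M-estimator. It is not the tracker's implementation. It fits a 2D line rather than a camera pose, and the choice of Tukey's biweight function, the scale estimate based on the median absolute deviation, and all function names are assumptions made for this example only.

```python
import statistics


def tukey_weights(residuals, c=4.6851):
    """Tukey biweight weights (assumed M-estimator for this sketch).

    Residuals are centred on their median and scaled by a robust
    standard deviation derived from the median absolute deviation (MAD).
    """
    med = statistics.median(residuals)
    mad = statistics.median([abs(r - med) for r in residuals])
    sigma = max(1.4826 * mad, 1e-9)  # floor avoids division by zero
    weights = []
    for r in residuals:
        u = (r - med) / (c * sigma)
        # Residuals beyond c * sigma are treated as outliers (weight 0).
        weights.append((1.0 - u * u) ** 2 if abs(u) < 1.0 else 0.0)
    return weights


def weighted_line_fit(xs, ys, ws):
    """Solve the weighted normal equations for y = a * x + b."""
    sw = sum(ws)
    sx = sum(w * x for w, x in zip(ws, xs))
    sy = sum(w * y for w, y in zip(ws, ys))
    sxx = sum(w * x * x for w, x in zip(ws, xs))
    sxy = sum(w * x * y for w, x, y in zip(ws, xs, ys))
    det = sw * sxx - sx * sx
    a = (sw * sxy - sx * sy) / det
    b = (sxx * sy - sx * sxy) / det
    return a, b


def irls_line_fit(xs, ys, iterations=20):
    """Alternate between a weighted fit and a weight update."""
    ws = [1.0] * len(xs)  # first pass is ordinary least squares
    a, b = 0.0, 0.0
    for _ in range(iterations):
        a, b = weighted_line_fit(xs, ys, ws)
        residuals = [y - (a * x + b) for x, y in zip(xs, ys)]
        ws = tukey_weights(residuals)
    return a, b, ws


if __name__ == "__main__":
    # Points near y = 2x + 1, with one gross outlier at index 5.
    xs = [float(i) for i in range(10)]
    ys = [2.0 * x + 1.0 + 0.05 * (-1) ** i for i, x in enumerate(xs)]
    ys[5] = 100.0
    a, b, ws = irls_line_fit(xs, ys)
    print("slope:", a, "intercept:", b)
    print("weight of the outlier:", ws[5])
```

In the tracker, the same kind of weights would scale the contribution of each visual feature in the control law, so that features affected by occlusion or mistracking have little or no influence on the estimated pose.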

Results of the virtual visual servoing tracker are presented on the Real-time 3D localisation and tracking page.

On this page we present results of extending this approach to multiple cameras. The results show the method to be robust to occlusion, changes in illumination and mistracking.